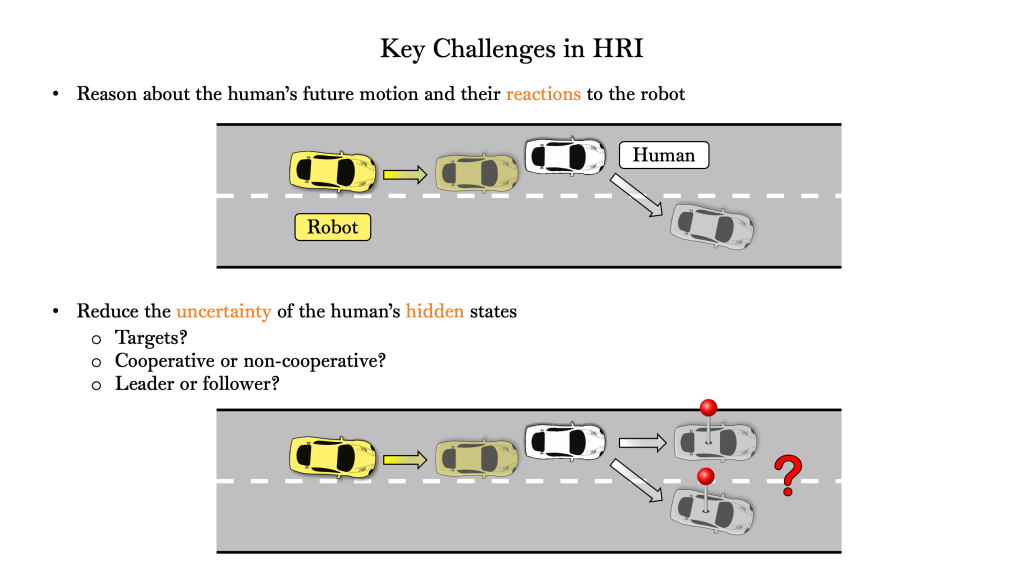

The ability to accurately predict human behavior is central to the safety and efficiency of robot autonomy in interactive settings. Unfortunately, robots often lack access to key information on which these predictions may hinge, such as people’s goals, attention, and willingness to cooperate.

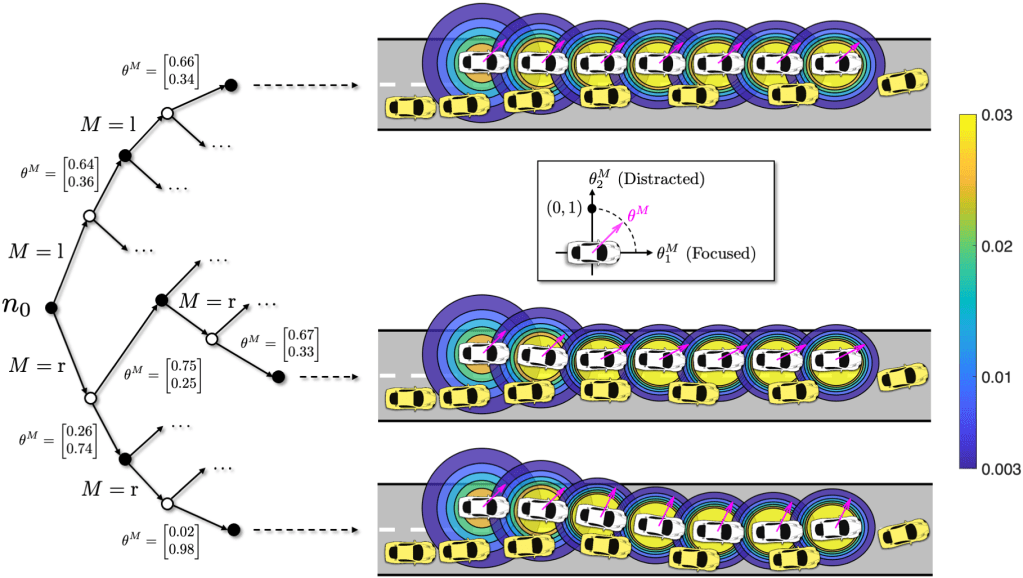

Dual control theory addresses this challenge by treating unknown parameters of a predictive model as stochastic hidden states and inferring their values at runtime using information gathered during system operation. While able to optimally and automatically trade off exploration and exploitation, dual control is computationally intractable for general interactive motion planning, mainly due to the fundamental coupling between robot trajectory optimization and human intent inference.
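To make the runtime inference step concrete, here is a minimal sketch of a Bayesian belief update over a categorical hidden parameter. It is not taken from the paper or its code: the two-hypothesis setup, the Gaussian observation model, and the names `belief_update`, `gaussian_likelihood`, and `sigma` are all illustrative assumptions.

```python
import numpy as np

def gaussian_likelihood(observed, predicted, sigma):
    """Unnormalized Gaussian likelihood of an observed human action."""
    return np.exp(-0.5 * ((observed - predicted) / sigma) ** 2)

def belief_update(prior, observed_action, predicted_actions, sigma=0.5):
    """One Bayes step over a categorical hidden parameter.

    prior:             probabilities over K hypotheses, shape (K,)
    predicted_actions: action predicted under each hypothesis, shape (K,)
    sigma:             assumed observation noise (hypothetical value)
    """
    likelihood = gaussian_likelihood(observed_action, predicted_actions, sigma)
    posterior = prior * likelihood
    return posterior / posterior.sum()  # normalize so the belief sums to 1

# Hypothetical example: hypothesis 0 predicts braking (-1.0),
# hypothesis 1 predicts accelerating (+1.0).
prior = np.array([0.5, 0.5])
predicted = np.array([-1.0, 1.0])
posterior = belief_update(prior, observed_action=-0.8, predicted_actions=predicted)
```

In this toy setting, an observed action close to braking shifts most of the probability mass toward hypothesis 0. A dual control planner additionally reasons about how the robot's own actions shape such future observations, which this sketch does not attempt.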

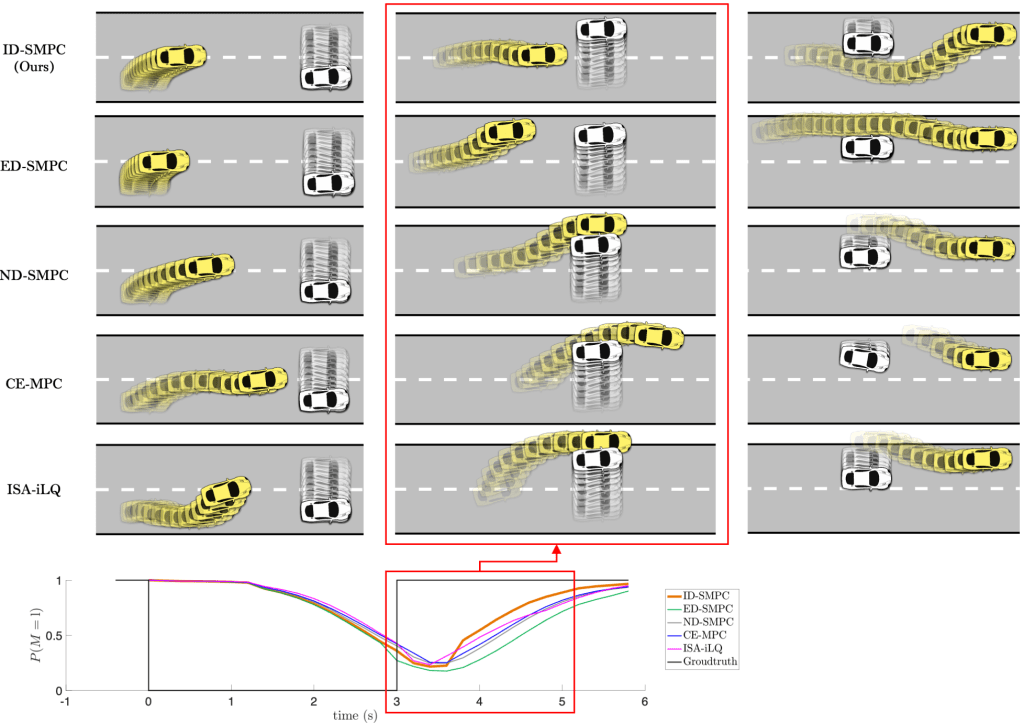

We propose a novel algorithmic approach to enable active uncertainty reduction for interactive motion planning based on the implicit dual control paradigm. Our approach relies on sampling-based approximation of stochastic dynamic programming, leading to a scenario-based stochastic model predictive control (SMPC) problem that can be readily solved by real-time gradient-based optimization methods.
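The sampling idea can be illustrated with a small sketch that approximates an expected cost by averaging over sampled scenarios of the unknown human parameter. This is a toy under stated assumptions, not the paper's formulation: the 1-D point-mass dynamics, the cost terms, and the names `rollout_cost` and `scenario_expected_cost` are hypothetical, and the belief is held fixed along each scenario, so the sketch does not capture the dual control effect or the gradient-based solve.

```python
import numpy as np

def rollout_cost(robot_controls, theta, dt=0.1):
    """Cost of one scenario with robot and human as 1-D point masses (toy model)."""
    robot_pos, human_pos = 0.0, 2.0
    cost = 0.0
    for u in robot_controls:
        robot_pos += dt * u
        human_pos += dt * theta  # assumed human model: constant velocity theta
        gap = human_pos - robot_pos
        # control effort plus a penalty when the gap drops below 1.0
        cost += 0.01 * u**2 + max(0.0, 1.0 - gap) ** 2
    return cost

def scenario_expected_cost(robot_controls, belief_mean, belief_std,
                           num_scenarios=50, seed=0):
    """Monte Carlo estimate of the expected cost under a Gaussian belief on theta."""
    rng = np.random.default_rng(seed)
    thetas = rng.normal(belief_mean, belief_std, size=num_scenarios)
    return float(np.mean([rollout_cost(robot_controls, th) for th in thetas]))

# Candidate robot control sequence: constant velocity command over 20 steps.
controls = np.full(20, 1.0)
narrow = scenario_expected_cost(controls, belief_mean=0.5, belief_std=0.0)
```

An optimizer would search over `controls` to minimize this averaged cost; in a scenario-based SMPC scheme, the scenarios would also carry the belief updates that the robot's actions induce.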

The resulting policy preserves the dual control effect for a broad class of predictive human models with both continuous and categorical uncertainty. We demonstrate our approach with simulated driving examples.

- Preprint is available on arXiv: https://arxiv.org/abs/2202.07720

- Code can be found on GitHub: https://github.com/SafeRoboticsLab/Dual_Control_HRI

- Watch our spotlight video: